graph LR

A["Query"] --> B["Embed"]

B --> C["Retrieve<br/>Top-k"]

C --> D["Generate"]

D --> E["Answer"]

style A fill:#4a90d9,color:#fff,stroke:#333

style B fill:#9b59b6,color:#fff,stroke:#333

style C fill:#e67e22,color:#fff,stroke:#333

style D fill:#C8CFEA,color:#fff,stroke:#333

style E fill:#1abc9c,color:#fff,stroke:#333

Agentic RAG: When Retrieval Needs Reasoning

Building RAG agents that plan queries, route to tools, self-reflect, and iteratively refine answers with LangGraph and LlamaIndex Workflows

Keywords: Agentic RAG, Self-RAG, Corrective RAG, CRAG, LangGraph, LlamaIndex, FunctionAgent, AgentWorkflow, query planning, tool routing, self-reflection, retrieval grading, adaptive retrieval, multi-step reasoning, state machine, ReAct, agent

Introduction

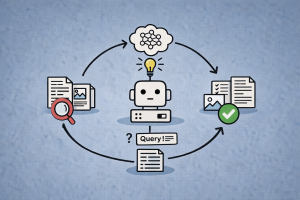

Standard RAG follows a fixed linear pipeline: embed the query, retrieve top-k chunks, pass them to an LLM, generate an answer. This works well for straightforward factual questions — “What is the default timeout?” — where a single retrieval pass finds the right context. But many real-world questions require more.

Consider: “Compare the latency and cost trade-offs of deploying model X with vLLM vs. Ollama, and recommend which to use for our batch inference workload.” This question needs the system to:

- Decompose the query into sub-questions (latency of X on vLLM, cost of X on vLLM, same for Ollama, batch inference requirements)

- Route each sub-question to the right data source (deployment docs, pricing data, benchmark results)

- Evaluate whether retrieved documents actually answer each sub-question

- Re-try with a rewritten query if retrieval fails

- Synthesize a comparative answer from multiple retrieval results

A fixed pipeline cannot do any of this. It retrieves once, generates once, and hopes for the best. Agentic RAG replaces this pipeline with an autonomous agent that reasons about what to retrieve, evaluates what it finds, and iteratively refines until it has a satisfactory answer.

This article covers the progression from naive RAG to fully agentic retrieval: the four agentic design patterns (reflection, planning, tool use, multi-agent collaboration), key research papers (Self-RAG, CRAG), and hands-on implementations with LangGraph and LlamaIndex.

Why Standard RAG Breaks Down

The Single-Shot Retrieval Problem

Standard RAG makes a critical assumption: one retrieval pass is sufficient. The query is embedded, the top-k most similar chunks are returned, and the LLM generates from whatever it gets. There is no feedback loop.

This fails in several predictable ways:

| Failure Mode | Example | Root Cause |

|---|---|---|

| Irrelevant retrieval | Query about “Python decorators” retrieves docs about “Python snakes” | Embedding ambiguity, no relevance check |

| Incomplete retrieval | Multi-part question, only first part answered | Single query can’t capture all facets |

| Wrong data source | Question needs SQL data but system only searches vector store | No routing between tools |

| Stale or missing data | “What’s the latest version?” retrieves outdated chunk | No fallback to web search or other sources |

| Hallucination from noise | LLM generates confidently from marginally relevant chunks | No grounding check on retrieved context |
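The first failure mode can be reproduced with a toy retriever. The sketch below is an illustration only: it swaps the embedding model for plain word overlap, and the two-document corpus and function names are invented for the example. It shows how a single retrieval pass with no relevance check returns whatever ranks highest, even when it is the wrong document.

```python
import re

# Hypothetical two-document corpus, invented for this illustration.
CORPUS = {
    "snakes": "Python snakes are large constrictors found in Africa and Asia",
    "decorators": "A decorator wraps a function to extend its behavior",
}

def tokens(text: str) -> set[str]:
    """Lowercase word set: a crude stand-in for an embedding."""
    return set(re.findall(r"[a-z]+", text.lower()))

def retrieve_top1(query: str) -> str:
    """Single-shot retrieval: return the id of the best-overlapping document."""
    query_tokens = tokens(query)
    return max(CORPUS, key=lambda doc_id: len(query_tokens & tokens(CORPUS[doc_id])))

# "decorators" (plural) never matches "decorator", so the shared word
# "python" decides the ranking and the wrong document wins.
print(retrieve_top1("Python decorators"))
```

Nothing in this pipeline notices the mismatch, which is the gap the grading steps described later are meant to close.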

From Pipelines to Agents

The solution is to give the retrieval system the ability to reason about its own process. Instead of a fixed pipeline, we build a state machine (or agent loop) where an LLM makes decisions at each step:

graph TD

A["User Query"] --> B{"Need<br/>Retrieval?"}

B -->|Yes| C["Plan: Decompose<br/>into Sub-queries"]

B -->|No| G["Generate<br/>from Knowledge"]

C --> D["Route: Select<br/>Tool per Sub-query"]

D --> E["Retrieve from<br/>Selected Source"]

E --> F{"Documents<br/>Relevant?"}

F -->|Yes| H["Generate<br/>Answer"]

F -->|No| I["Rewrite Query<br/>& Re-retrieve"]

I --> E

H --> J{"Answer<br/>Sufficient?"}

J -->|Yes| K["Return<br/>Final Answer"]

J -->|No| C

style A fill:#4a90d9,color:#fff,stroke:#333

style B fill:#f5a623,color:#fff,stroke:#333

style C fill:#9b59b6,color:#fff,stroke:#333

style D fill:#27ae60,color:#fff,stroke:#333

style E fill:#e67e22,color:#fff,stroke:#333

style F fill:#f5a623,color:#fff,stroke:#333

style G fill:#C8CFEA,color:#fff,stroke:#333

style H fill:#C8CFEA,color:#fff,stroke:#333

style I fill:#e74c3c,color:#fff,stroke:#333

style J fill:#f5a623,color:#fff,stroke:#333

style K fill:#1abc9c,color:#fff,stroke:#333

This is Agentic RAG: retrieval augmented generation where an autonomous agent controls the retrieval process using reasoning, planning, tool selection, and self-reflection.
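A minimal sketch of the core loop, assuming the retriever, grader, rewriter, and generator are passed in as plain functions. All names here are hypothetical, and planning, routing, and the final answer check from the diagram are left out to keep the example short.

```python
from typing import Callable

def agentic_answer(
    query: str,
    retrieve: Callable[[str], list[str]],
    is_relevant: Callable[[str, str], bool],
    rewrite: Callable[[str], str],
    generate: Callable[[str, list[str]], str],
    max_rounds: int = 3,
) -> str:
    """Retrieve, grade, and rewrite until relevant context is found or the budget runs out."""
    current = query
    for _ in range(max_rounds):
        docs = [d for d in retrieve(current) if is_relevant(query, d)]
        if docs:
            return generate(query, docs)
        current = rewrite(current)  # retrieval failed: try a different query
    return generate(query, [])  # budget exhausted: answer without context

# Toy stand-ins (all hypothetical) so the loop runs without an LLM.
KB = {"vllm batch throughput": ["vLLM batches requests to raise throughput."]}
calls: list[str] = []

def toy_retrieve(q: str) -> list[str]:
    calls.append(q)
    return KB.get(q, [])

answer = agentic_answer(
    "is vllm fast?",
    retrieve=toy_retrieve,
    is_relevant=lambda q, d: "vLLM" in d,
    rewrite=lambda q: "vllm batch throughput",
    generate=lambda q, docs: f"{len(docs)} doc(s) used",
)
print(answer, calls)
```

The first retrieval returns nothing, the rewrite step changes the query, and the second pass succeeds. The `max_rounds` bound keeps the loop from running indefinitely.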

The Four Agentic Design Patterns

The survey by Singh et al. (2025) identifies four core design patterns that transform static RAG into agentic RAG. These patterns can be composed — most production agentic RAG systems use multiple patterns together.

1. Reflection

The agent evaluates its own outputs and decides whether to accept or retry. This is the most impactful pattern for RAG quality.

Applied to RAG:

- Retrieval grading: Are the retrieved documents actually relevant to the query?

- Hallucination detection: Is the generated answer grounded in the retrieved context?

- Answer quality check: Does the answer actually address the user’s question?

graph LR

A["Retrieve"] --> B["Grade<br/>Documents"]

B -->|Relevant| C["Generate"]

B -->|Irrelevant| D["Rewrite Query"]

D --> A

C --> E["Grade<br/>Generation"]

E -->|Grounded| F["Return Answer"]

E -->|Hallucination| C

style A fill:#e67e22,color:#fff,stroke:#333

style B fill:#f5a623,color:#fff,stroke:#333

style C fill:#C8CFEA,color:#fff,stroke:#333

style D fill:#e74c3c,color:#fff,stroke:#333

style E fill:#f5a623,color:#fff,stroke:#333

style F fill:#1abc9c,color:#fff,stroke:#333
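To make the "Grade Generation" step concrete, here is a deliberately crude sketch that scores an answer by word overlap with the retrieved context. In practice this check is usually an LLM call, as in the LangGraph implementation later in this article. The word-overlap heuristic and the 0.8 threshold are assumptions chosen only for illustration.

```python
import re

def _words(text: str) -> set[str]:
    """Lowercase alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def grounding_score(answer: str, context: str) -> float:
    """Fraction of answer words that also occur in the context (0.0 to 1.0)."""
    answer_words = _words(answer)
    if not answer_words:
        return 0.0
    return len(answer_words & _words(context)) / len(answer_words)

def grade_generation(answer: str, context: str, threshold: float = 0.8) -> str:
    """Map the score onto the two branches of the diagram."""
    if grounding_score(answer, context) >= threshold:
        return "grounded"
    return "hallucination"

context = "The default timeout is 30 seconds."
print(grade_generation("The default timeout is 30 seconds", context))
print(grade_generation("The timeout is 90 minutes and cannot be changed", context))
```

The second answer shares only three of its nine words with the context, so it falls on the "hallucination" branch and would be regenerated.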

2. Planning

The agent decomposes complex queries into sub-tasks before retrieving. Instead of a single monolithic query, it creates a retrieval plan.

Applied to RAG:

- Break “Compare X and Y on dimensions A, B, C” into separate sub-queries

- Identify which sub-queries need retrieval and which can be answered from prior context

- Sequence sub-queries when later ones depend on earlier results
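The planning steps above can be sketched without an LLM. The example below assumes the subjects and dimensions have already been extracted from the question (an LLM would normally do that part), expands them into sub-queries, and uses the standard-library `graphlib` module to order steps that depend on each other. The step names are hypothetical.

```python
from graphlib import TopologicalSorter
from itertools import product

def plan_comparison(subjects: list[str], dimensions: list[str]) -> list[str]:
    """Expand 'compare X and Y on A, B' into one sub-query per (subject, dimension)."""
    return [f"What is the {dim} of {subj}?" for subj, dim in product(subjects, dimensions)]

plan = plan_comparison(["vLLM", "Ollama"], ["latency", "cost"])
for sub_query in plan:
    print(sub_query)

# Each step maps to the steps it depends on; the recommendation needs both lookups first.
dependencies = {"recommend": {"latency", "cost"}, "latency": set(), "cost": set()}
order = list(TopologicalSorter(dependencies).static_order())
print(order)
```

The two lookups have no dependencies and could run in parallel; only the recommendation has to wait for both.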

3. Tool Use

The agent selects the right tool for each retrieval task. Tools might include:

- Vector search over different indices

- SQL queries against structured databases

- Web search for current information

- Knowledge graph traversal for relational queries

- API calls to external services

- Calculator or code execution for computational questions
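One way to picture tool selection is a registry of callables plus a routing function. The sketch below uses keyword rules as a stand-in for the LLM router shown later in this article. The tool names, the keyword lists, and the placeholder tool bodies are all hypothetical.

```python
from typing import Callable

# Placeholder tools: each just tags the question with the source it would query.
TOOLS: dict[str, Callable[[str], str]] = {
    "vector_search": lambda q: f"[vector] {q}",
    "sql": lambda q: f"[sql] {q}",
    "web_search": lambda q: f"[web] {q}",
}

def choose_tool(question: str) -> str:
    """Keyword routing; a real agent would ask an LLM to make this choice."""
    q = question.lower()
    if any(marker in q for marker in ("how many", "average", "total")):
        return "sql"
    if any(marker in q for marker in ("latest", "today", "recent")):
        return "web_search"
    return "vector_search"

def run_tool(question: str) -> str:
    """Look up the chosen tool in the registry and call it."""
    return TOOLS[choose_tool(question)](question)

print(run_tool("How many users signed up last week?"))
print(run_tool("What's the latest version?"))
print(run_tool("How do I configure request retries?"))
```

Keeping tools behind a uniform `str -> str` interface means new sources can be added to the registry without touching the routing call site.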

4. Multi-Agent Collaboration

Multiple specialized agents work together. For example:

- A router agent decides which specialist to invoke

- A retrieval agent handles document search

- A synthesis agent combines results into a coherent answer

- A fact-check agent verifies claims against sources
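As a rough sketch of how these roles hand work to each other, the example below models each agent as a function that updates a shared task object. Real agents would each wrap an LLM call. The `Task` fields, the source sentences, and the keyword matching are assumptions made for the illustration.

```python
import re
from dataclasses import dataclass, field

# Hypothetical source sentences standing in for a document store.
SOURCES = [
    "vLLM supports continuous batching.",
    "Ollama targets local, single-user inference.",
]

@dataclass
class Task:
    question: str
    route: str = ""
    evidence: list[str] = field(default_factory=list)
    draft: str = ""
    verified: bool = False

def router_agent(task: Task) -> Task:
    """Decide which specialist handles the question (only one exists here)."""
    task.route = "docs"
    return task

def retrieval_agent(task: Task) -> Task:
    """Keep sources that share a keyword (4+ letters) with the question."""
    keywords = [w for w in re.findall(r"[a-z]+", task.question.lower()) if len(w) >= 4]
    task.evidence = [s for s in SOURCES if any(k in s.lower() for k in keywords)]
    return task

def synthesis_agent(task: Task) -> Task:
    """Combine the evidence into a draft answer."""
    task.draft = " ".join(task.evidence)
    return task

def fact_check_agent(task: Task) -> Task:
    """Accept the draft only if it appears verbatim in the sources."""
    task.verified = bool(task.draft) and task.draft in " ".join(SOURCES)
    return task

PIPELINE = [router_agent, retrieval_agent, synthesis_agent, fact_check_agent]

def run(question: str) -> Task:
    task = Task(question)
    for agent in PIPELINE:
        task = agent(task)
    return task

result = run("Does vLLM support batching?")
print(result.draft, result.verified)
```

A fixed pipeline is the simplest coordination scheme. More elaborate setups let the router pick a different sequence of specialists per question.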

| Pattern | Key Capability | RAG Application | Complexity |

|---|---|---|---|

| Reflection | Self-evaluate and retry | Grade retrieval, detect hallucination | Low |

| Planning | Decompose and sequence | Multi-part queries, sub-question generation | Medium |

| Tool Use | Select appropriate tool | Route to vector/SQL/web/graph | Medium |

| Multi-Agent | Specialize and coordinate | Complex workflows, parallel retrieval | High |

Key Research: Self-RAG and Corrective RAG

Two papers have been particularly influential in shaping how agentic RAG is implemented in practice.

Self-RAG (Asai et al., 2023)

Core idea: Train the LLM to generate reflection tokens that control the RAG process at inference time.

Self-RAG introduces four types of reflection tokens:

| Token | Decision | Input | Output |

|---|---|---|---|

| Retrieve | Should I retrieve documents? | Question (+ generation) | yes, no, continue |

| ISREL | Is this document relevant? | Question + document | relevant, irrelevant |

| ISSUP | Is the generation supported? | Question + document + generation | fully supported, partial, no support |

| ISUSE | Is the generation useful? | Question + generation | 5-point scale |

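The flow: the model decides whether to retrieve, grades each retrieved document for relevance, generates an answer, then checks whether the answer is supported by the documents and useful to the original question. In the paper, these critiques are used at decoding time to score and rank candidate segments. Graph-based implementations, such as the one later in this article, approximate the same checks with explicit retry loops.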

Key insight: By making retrieval adaptive (only retrieve when needed) and adding self-critique (check relevance, support, and usefulness), Self-RAG significantly outperforms both standard RAG and ChatGPT on open-domain QA, reasoning, and fact verification.
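To illustrate how the critique tokens in the table can drive selection among candidate generations, here is a simplified sketch. It is not the paper's algorithm: Self-RAG works with token probabilities inside the decoder, whereas this example maps the discrete labels to numbers and takes a weighted sum. The weights and the numeric mapping are assumptions.

```python
from dataclasses import dataclass

# Assumed numeric values for the ISSUP labels listed in the table above.
ISSUP_SCORE = {"fully supported": 1.0, "partial": 0.5, "no support": 0.0}

@dataclass
class Candidate:
    text: str
    isrel: str  # "relevant" or "irrelevant"
    issup: str  # a key of ISSUP_SCORE
    isuse: int  # 1 (worst) to 5 (best)

def critique_score(c: Candidate, w_rel: float = 1.0, w_sup: float = 1.0, w_use: float = 0.5) -> float:
    """Weighted sum of the three critiques, each scaled to the range 0..1."""
    rel = 1.0 if c.isrel == "relevant" else 0.0
    sup = ISSUP_SCORE[c.issup]
    use = (c.isuse - 1) / 4
    return w_rel * rel + w_sup * sup + w_use * use

def pick_best(candidates: list[Candidate]) -> Candidate:
    return max(candidates, key=critique_score)

candidates = [
    Candidate("A", "relevant", "partial", 5),
    Candidate("B", "relevant", "fully supported", 4),
    Candidate("C", "irrelevant", "no support", 5),
]
best = pick_best(candidates)
print(best.text, critique_score(best))
```

Candidate B wins despite a lower usefulness rating because full support outweighs it under these weights. Changing the weights shifts the balance between grounding and helpfulness.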

Corrective RAG — CRAG (Yan et al., 2024)

Core idea: Add a retrieval evaluator that assesses document quality and triggers corrective actions.

CRAG’s flow:

- Retrieve documents from the vector store

- A lightweight evaluator scores each document’s relevance (Correct / Ambiguous / Incorrect)

- Based on scores:

  - Correct: Proceed to generation with knowledge refinement

  - Ambiguous: Supplement with web search results

  - Incorrect: Discard vector results, fall back entirely to web search

- Knowledge refinement: Decompose documents into “knowledge strips”, grade each strip, filter out irrelevant ones

Key insight: CRAG treats retrieval failure as expected rather than exceptional. By planning for failure — with web search fallback and knowledge strip filtering — it makes RAG robust to noisy or incomplete retrieval.
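The CRAG decision logic above can be sketched in a few lines. The thresholds, the sentence-level definition of a "knowledge strip", and the function names are assumptions for this example. In the paper, the relevance scores come from a trained evaluator rather than a hand-written rule.

```python
from typing import Callable

UPPER, LOWER = 0.7, 0.3  # hypothetical confidence thresholds

def crag_action(scores: list[float]) -> str:
    """Correct if any document clears UPPER, incorrect if all fall below LOWER."""
    if any(s >= UPPER for s in scores):
        return "correct"
    if all(s < LOWER for s in scores):
        return "incorrect"
    return "ambiguous"

def refine(document: str, is_relevant: Callable[[str], bool]) -> str:
    """Split a document into sentence-level strips and keep the relevant ones."""
    strips = [s.strip() for s in document.split(".") if s.strip()]
    return ". ".join(s for s in strips if is_relevant(s))

def build_context(action: str, internal: list[str], web: list[str]) -> list[str]:
    """Choose the knowledge sources for generation based on the evaluator's verdict."""
    if action == "correct":
        return internal
    if action == "incorrect":
        return web
    return internal + web  # ambiguous: use both

doc = "vLLM batches requests. The office is closed on Fridays."
refined = refine(doc, lambda strip: "vLLM" in strip)
print(crag_action([0.5, 0.1]), build_context("ambiguous", [refined], ["web result"]))
```

An empty score list is treated as "incorrect", so a retrieval that returns nothing falls through to web search.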

Implementing Agentic RAG with LangGraph

LangGraph models computation as a state graph: nodes are functions that process state, edges define transitions (including conditional edges based on LLM decisions), and state flows through the graph with each step. This maps naturally to agentic RAG, where each decision point (grade documents? rewrite query? search the web?) is a conditional edge.
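To make the node, edge, and state vocabulary concrete before using the real library, here is a tiny stand-alone runner written for this article. `MiniGraph` is not LangGraph's API and its method names are hypothetical. It only illustrates the idea that nodes return state updates and edges, fixed or conditional, pick the next node.

```python
from typing import Callable

State = dict
END = "__end__"

class MiniGraph:
    """Toy state graph: nodes return partial state updates, edges choose the next node."""

    def __init__(self) -> None:
        self.nodes: dict[str, Callable[[State], State]] = {}
        self.edges: dict[str, Callable[[State], str]] = {}

    def add_node(self, name: str, fn: Callable[[State], State]) -> None:
        self.nodes[name] = fn

    def add_edge(self, src: str, dst: str) -> None:
        self.edges[src] = lambda state: dst  # fixed transition

    def add_conditional_edge(self, src: str, router: Callable[[State], str]) -> None:
        self.edges[src] = router  # transition decided from the current state

    def run(self, start: str, state: State, max_steps: int = 25) -> State:
        current = start
        for _ in range(max_steps):
            state = {**state, **self.nodes[current](state)}
            current = self.edges[current](state)
            if current == END:
                return state
        raise RuntimeError("step limit reached")

graph = MiniGraph()
# The retriever finds nothing on its first attempt and one document afterwards.
graph.add_node("retrieve", lambda s: {
    "attempts": s["attempts"] + 1,
    "docs": ["doc"] if s["attempts"] >= 1 else [],
})
graph.add_node("generate", lambda s: {"answer": f"answered from {len(s['docs'])} doc(s)"})
graph.add_conditional_edge("retrieve", lambda s: "generate" if s["docs"] else "retrieve")
graph.add_edge("generate", END)

final = graph.run("retrieve", {"attempts": 0, "docs": []})
print(final)
```

The conditional edge after `retrieve` plays the role of a grading decision: it sends the run back to retrieval until documents are available, and `max_steps` caps the total work.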

Architecture: Corrective RAG with LangGraph

graph TD

A["__start__"] --> B["retrieve"]

B --> C["grade_documents"]

C --> D{"Any Relevant<br/>Documents?"}

D -->|Yes| E["generate"]

D -->|No| G["web_search"]

G --> E

E --> H{"Hallucination<br/>Check"}

H -->|Grounded| I{"Answers<br/>Question?"}

H -->|Not Grounded| E

I -->|Yes| J["__end__"]

I -->|No| F["rewrite_query"]

F --> B

style A fill:#4a90d9,color:#fff,stroke:#333

style B fill:#e67e22,color:#fff,stroke:#333

style C fill:#f5a623,color:#fff,stroke:#333

style D fill:#f5a623,color:#fff,stroke:#333

style E fill:#C8CFEA,color:#fff,stroke:#333

style F fill:#e74c3c,color:#fff,stroke:#333

style G fill:#9b59b6,color:#fff,stroke:#333

style H fill:#f5a623,color:#fff,stroke:#333

style I fill:#f5a623,color:#fff,stroke:#333

style J fill:#1abc9c,color:#fff,stroke:#333

Step 1: Define the State

from typing import Literal

from typing_extensions import TypedDict


class AgentState(TypedDict):
    """State that flows through the agentic RAG graph."""

    question: str
    documents: list[str]
    generation: str
    web_search_needed: bool
    retry_count: int

Step 2: Build the Retriever

from langchain_openai import OpenAIEmbeddings
from langchain_community.vectorstores import FAISS
from langchain_text_splitters import RecursiveCharacterTextSplitter
from langchain_community.document_loaders import DirectoryLoader, PyPDFLoader

# Index documents
loader = DirectoryLoader("./data", glob="**/*.pdf", loader_cls=PyPDFLoader)
docs = loader.load()

splitter = RecursiveCharacterTextSplitter(chunk_size=512, chunk_overlap=50)
chunks = splitter.split_documents(docs)

embeddings = OpenAIEmbeddings(model="text-embedding-3-small")
vectorstore = FAISS.from_documents(chunks, embeddings)
retriever = vectorstore.as_retriever(search_kwargs={"k": 5})

Step 3: Define the Graph Nodes

from langchain_openai import ChatOpenAI
from langchain_core.prompts import ChatPromptTemplate
from langchain_core.output_parsers import StrOutputParser
from pydantic import BaseModel, Field

llm = ChatOpenAI(model="gpt-4o-mini", temperature=0)


# --- Node: Retrieve ---
def retrieve(state: AgentState) -> AgentState:
    """Retrieve documents from vector store."""
    question = state["question"]
    documents = retriever.invoke(question)
    return {
        **state,
        "documents": [doc.page_content for doc in documents],
    }

# --- Node: Grade Documents ---
class RelevanceGrade(BaseModel):
    """Binary relevance grade for a retrieved document."""

    is_relevant: bool = Field(
        description="Whether the document is relevant to the question"
    )


grader_llm = llm.with_structured_output(RelevanceGrade)

GRADE_PROMPT = ChatPromptTemplate.from_messages([
    ("system", "You are a grader assessing whether a retrieved document "
               "is relevant to a user question. Answer with is_relevant=true or false."),
    ("human", "Document:\n{document}\n\nQuestion: {question}"),
])

grader_chain = GRADE_PROMPT | grader_llm


def grade_documents(state: AgentState) -> AgentState:
    """Grade each retrieved document for relevance."""
    question = state["question"]
    documents = state["documents"]

    relevant_docs = []
    for doc in documents:
        grade = grader_chain.invoke(
            {"document": doc, "question": question}
        )
        if grade.is_relevant:
            relevant_docs.append(doc)

    return {
        **state,
        "documents": relevant_docs,
        "web_search_needed": len(relevant_docs) == 0,
    }

# --- Node: Rewrite Query ---
REWRITE_PROMPT = ChatPromptTemplate.from_messages([
    ("system", "You are a query rewriter. Given a question that did not "
               "retrieve good results, rewrite it to be more specific and "
               "search-friendly. Return only the rewritten question."),
    ("human", "Original question: {question}"),
])

rewrite_chain = REWRITE_PROMPT | llm | StrOutputParser()


def rewrite_query(state: AgentState) -> AgentState:
    """Rewrite the query for better retrieval."""
    new_question = rewrite_chain.invoke(
        {"question": state["question"]}
    )
    return {
        **state,
        "question": new_question,
        "retry_count": state.get("retry_count", 0) + 1,
    }

# --- Node: Web Search ---
from langchain_community.tools.tavily_search import TavilySearchResults

web_search_tool = TavilySearchResults(max_results=3)


def web_search(state: AgentState) -> AgentState:
    """Supplement retrieval with web search results."""
    question = state["question"]
    results = web_search_tool.invoke({"query": question})
    web_docs = [r["content"] for r in results]
    return {
        **state,
        "documents": state["documents"] + web_docs,
    }

# --- Node: Generate ---
RAG_PROMPT = ChatPromptTemplate.from_messages([
    ("system", "You are an assistant answering questions based on "
               "provided context. Answer only from the context. If the context "
               "is insufficient, say so."),
    ("human", "Context:\n{context}\n\nQuestion: {question}"),
])

generate_chain = RAG_PROMPT | llm | StrOutputParser()


def generate(state: AgentState) -> AgentState:
    """Generate an answer from retrieved documents."""
    context = "\n\n".join(state["documents"])
    generation = generate_chain.invoke(
        {"context": context, "question": state["question"]}
    )
    return {**state, "generation": generation}

Step 4: Define Conditional Edges (Graders)

class GradeHallucination(BaseModel):
    """Check if generation is grounded in documents."""

    is_grounded: bool = Field(
        description="Whether the answer is grounded in the provided documents"
    )


class GradeAnswer(BaseModel):
    """Check if generation answers the question."""

    answers_question: bool = Field(
        description="Whether the answer addresses the user's question"
    )


hallucination_grader = llm.with_structured_output(GradeHallucination)
answer_grader = llm.with_structured_output(GradeAnswer)


def should_search_web(state: AgentState) -> Literal["web_search", "generate"]:
    """Route based on document relevance."""
    if state["web_search_needed"]:
        return "web_search"
    return "generate"

def check_generation(state: AgentState) -> Literal["end", "rewrite_query", "generate"]:
    """Check if generation is grounded and answers the question."""
    # Limit retries to prevent infinite loops
    if state.get("retry_count", 0) >= 3:
        return "end"

    # Check hallucination. The grader wraps a chat model, so it takes a list
    # of messages directly rather than a {"messages": [...]} dict.
    context = "\n\n".join(state["documents"])
    hallucination = hallucination_grader.invoke([
        ("system", "Check if the answer is grounded in the provided documents."),
        ("human", f"Documents:\n{context}\n\nAnswer: {state['generation']}"),
    ])
    if not hallucination.is_grounded:
        # Re-generate. This branch does not change retry_count, so only
        # LangGraph's recursion limit bounds repeated regeneration.
        return "generate"

    # Check if it answers the question
    answer_check = answer_grader.invoke([
        ("system", "Check if the answer addresses the user's question."),
        ("human", f"Question: {state['question']}\n\nAnswer: {state['generation']}"),
    ])
    if not answer_check.answers_question:
        return "rewrite_query"  # Try different query

    return "end"

Step 5: Build and Run the Graph

from langgraph.graph import StateGraph, END

# Build the graph
workflow = StateGraph(AgentState)

# Add nodes
workflow.add_node("retrieve", retrieve)
workflow.add_node("grade_documents", grade_documents)
workflow.add_node("rewrite_query", rewrite_query)
workflow.add_node("web_search", web_search)
workflow.add_node("generate", generate)

# Set entry point
workflow.set_entry_point("retrieve")

# Add edges
workflow.add_edge("retrieve", "grade_documents")
workflow.add_conditional_edges(
    "grade_documents",
    should_search_web,
    {"web_search": "web_search", "generate": "generate"},
)
workflow.add_edge("web_search", "generate")
workflow.add_conditional_edges(
    "generate",
    check_generation,
    {"end": END, "rewrite_query": "rewrite_query", "generate": "generate"},
)
workflow.add_edge("rewrite_query", "retrieve")

# Compile
app = workflow.compile()

# Run
result = app.invoke({
    "question": "What are the key differences between RLHF and DPO?",
    "documents": [],
    "generation": "",
    "web_search_needed": False,
    "retry_count": 0,
})

print(result["generation"])

Adding Tool Routing

Extend the graph to route queries to different tools:

class RouteQuery(BaseModel):
    """Route a query to the most appropriate data source."""

    source: Literal["vectorstore", "web_search", "sql_database"] = Field(
        description="The data source to route the query to"
    )


router_llm = llm.with_structured_output(RouteQuery)

ROUTE_PROMPT = ChatPromptTemplate.from_messages([
    ("system",
     "You are a query router. Route the query to the best data source:\n"
     "- vectorstore: for questions about internal documentation\n"
     "- web_search: for questions about recent events or general knowledge\n"
     "- sql_database: for questions requiring counts, aggregations, or "
     "structured data lookups"),
    ("human", "{question}"),
])

router_chain = ROUTE_PROMPT | router_llm


def route_question(state: AgentState) -> Literal[
    "retrieve", "web_search", "sql_query"
]:
    """Route question to the appropriate tool."""
    result = router_chain.invoke({"question": state["question"]})
    # Map data sources to graph node names. A "sql_query" node is not
    # defined in the graph above and would need to be added.
    return {
        "vectorstore": "retrieve",
        "web_search": "web_search",
        "sql_database": "sql_query",
    }[result.source]

Implementing Agentic RAG with LlamaIndex

LlamaIndex provides two paths for agentic RAG: FunctionAgent for tool-calling agents, and Workflows for custom state machines.

Approach 1: FunctionAgent with RAG Tools

The simplest approach — wrap your RAG query engines as tools that an agent can invoke:

from llama_index.core import VectorStoreIndex, SimpleDirectoryReader, Settings
from llama_index.core.tools import QueryEngineTool
from llama_index.core.agent.workflow import FunctionAgent
from llama_index.embeddings.openai import OpenAIEmbedding
from llama_index.llms.openai import OpenAI

Settings.embed_model = OpenAIEmbedding(model="text-embedding-3-small")
Settings.llm = OpenAI(model="gpt-4o-mini", temperature=0)

# Build separate indices for different document collections
docs_api = SimpleDirectoryReader("./data/api_docs").load_data()
docs_guides = SimpleDirectoryReader("./data/guides").load_data()

index_api = VectorStoreIndex.from_documents(docs_api)
index_guides = VectorStoreIndex.from_documents(docs_guides)

# Create query engines
engine_api = index_api.as_query_engine(similarity_top_k=5)
engine_guides = index_guides.as_query_engine(similarity_top_k=5)

# Wrap as tools with descriptions (agent uses these to decide routing)
tools = [
    QueryEngineTool.from_defaults(
        query_engine=engine_api,
        name="api_docs",
        description="Search API reference documentation. Use for questions "
                    "about function signatures, parameters, return types, and endpoints.",
    ),
    QueryEngineTool.from_defaults(
        query_engine=engine_guides,
        name="user_guides",
        description="Search user guides and tutorials. Use for how-to "
                    "questions, architecture overviews, and best practices.",
    ),
]

# Create agentic RAG
agent = FunctionAgent(
    tools=tools,
    llm=OpenAI(model="gpt-4o", temperature=0),
    system_prompt=(
        "You are a helpful assistant that answers questions about our "
        "platform. Use the available tools to search relevant documentation. "
        "If one tool doesn't return useful results, try the other. "
        "Always cite which source you used."
    ),
)

# Run. Top-level await works in a notebook; in a script, wrap this call
# in an async function and start it with asyncio.run().
response = await agent.run(
    user_msg="How do I authenticate API requests? Show me an example."
)
print(response)

The agent will:

- Read the tool descriptions and decide which to call

- Call the tool (which runs vector retrieval internally)

- Evaluate the response and optionally call another tool

- Synthesize a final answer from all gathered context

Approach 2: Multi-Index Router Agent

For more indices, use a router that dynamically selects the best one:

from llama_index.core.tools import QueryEngineTool
from llama_index.core.agent.workflow import FunctionAgent

# Assume we have multiple indices
indices = {
    "deployment": index_deployment,
    "api": index_api,
    "tutorials": index_tutorials,
    "faq": index_faq,
}

tools = [
    QueryEngineTool.from_defaults(
        query_engine=idx.as_query_engine(similarity_top_k=5),
        name=name,
        description=f"Search the {name} documentation index.",
    )
    for name, idx in indices.items()
]

# Add a web search tool
from llama_index.tools.tavily_research import TavilyToolSpec

web_tool = TavilyToolSpec(api_key="your-key").to_tool_list()[0]
tools.append(web_tool)

agent = FunctionAgent(
    tools=tools,
    llm=OpenAI(model="gpt-4o", temperature=0),
    system_prompt=(
        "You are a documentation assistant with access to multiple "
        "knowledge bases and web search. For each question:\n"
        "1. Determine which tool(s) are most relevant\n"
        "2. Search the most likely source first\n"
        "3. If results are insufficient, try other tools\n"
        "4. Use web search only as a last resort\n"
        "5. Synthesize a complete answer with source attribution"
    ),
)

Approach 3: Sub-Question Query Engine

LlamaIndex’s SubQuestionQueryEngine automatically decomposes complex queries:

from llama_index.core.query_engine import SubQuestionQueryEngine
from llama_index.core.tools import QueryEngineTool

# Create tools from different indices
query_engine_tools = [
    QueryEngineTool.from_defaults(
        query_engine=index_deployment.as_query_engine(),
        name="deployment_docs",
        description="Documentation about deploying and serving ML models",
    ),
    QueryEngineTool.from_defaults(
        query_engine=index_pricing.as_query_engine(),
        name="pricing_docs",
        description="Pricing and cost information for different services",
    ),
]

# SubQuestionQueryEngine decomposes the query automatically
sub_question_engine = SubQuestionQueryEngine.from_defaults(
    query_engine_tools=query_engine_tools,
    llm=OpenAI(model="gpt-4o-mini", temperature=0),
)

# Complex question → automatically decomposed into sub-questions
response = sub_question_engine.query(
    "Compare the cost and latency of deploying Llama 3 with vLLM vs Ollama"
)
print(response)

# Internally, the engine generates sub-questions such as:
# Sub-question 1: "What is the cost of deploying Llama 3 with vLLM?"
# Sub-question 2: "What is the latency of deploying Llama 3 with vLLM?"
# Sub-question 3: "What is the cost of deploying Llama 3 with Ollama?"
# Sub-question 4: "What is the latency of deploying Llama 3 with Ollama?"

Agentic RAG Patterns Comparison

Progression from Simple to Agentic

| Level | Pattern | Description | Implementation |

|---|---|---|---|

| 0 | Naive RAG | Retrieve once → generate | Fixed chain |

| 1 | Query routing | Route to best retriever | Conditional edge |

| 2 | Corrective RAG | Grade retrieval → retry if bad | State graph with loop |

| 3 | Self-reflective RAG | Grade generation → rewrite if wrong | State graph with double loop |

| 4 | Adaptive RAG | Decide whether to retrieve at all | Agent with retrieval as optional tool |

| 5 | Multi-tool agent | Route across vector/SQL/web/graph | Agent with multiple tools |

| 6 | Multi-agent | Specialized agents collaborate | AgentWorkflow / LangGraph multi-agent |

LangGraph vs. LlamaIndex for Agentic RAG

| Aspect | LangGraph | LlamaIndex Agents |

|---|---|---|

| Paradigm | Explicit state graph (nodes + edges) | Tool-calling agent loop |

| Control flow | You define every transition and condition | Agent decides tool order autonomously |

| Visibility | Full graph visualization, step-by-step traces | Tool call logs, event streaming |

| Flexibility | Maximum — any graph topology | High — customizable via Workflows |

| Complexity | Higher — more code for explicit control | Lower — declarative tool definitions |

| Best for | Custom flows with specific decision logic | Tool routing, multi-index search |

| Self-reflection | Explicit grading nodes + conditional edges | Agent system prompt + retry logic |

| State management | Built-in TypedDict state, persistence | Context/state via agent memory |

Use LangGraph when you need precise control over the decision flow — when you want to define exactly what happens after document grading, what triggers web search, how many retries are allowed.

Use LlamaIndex agents when your primary need is routing across multiple data sources and you want the LLM to autonomously decide the retrieval strategy.

Production Considerations

1. Preventing Infinite Loops

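Agentic RAG involves loops (rewrite → retrieve → grade → rewrite…). Without an explicit limit, the system can keep cycling on ambiguous queries until the framework stops it; LangGraph, for example, raises an error once its recursion limit is exceeded. Every loop in the graph needs its own bound, including the regenerate-on-hallucination loop.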

# Always add a retry counter to your state
MAX_RETRIES = 3

def check_generation(state: AgentState) -> str:
    if state.get("retry_count", 0) >= MAX_RETRIES:
        return "end"  # Bail out with best available answer
    # ... grading logic ...

2. Latency vs. Quality Trade-off

Each agentic step adds an LLM call. A self-reflective RAG pipeline with grading can make 5–10 LLM calls per query vs. 1 for standard RAG.

| Strategy | LLM Calls per Query | Latency | Quality |

|---|---|---|---|

| Standard RAG | 1 | Low | Baseline |

| + Document grading | 1 + k (grading) | Medium | Better |

| + Query rewrite + re-retrieval | 3–5 | Higher | Much better |

| Full self-reflective | 5–10 | High | Best |
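A back-of-the-envelope way to estimate call counts for a graph like the corrective one above. The formula is an assumption about that specific design: one grading call per retrieved document, one generation call, up to two checks on the generation, and one extra call per query rewrite. Actual totals depend heavily on k and on how many passes run, so treat the ranges in the table as indicative.

```python
def llm_calls(k: int, rewrites: int = 0, grade_docs: bool = True, grade_generation: bool = True) -> int:
    """Estimate LLM calls per query for a retrieve/grade/generate/check graph.

    Assumes every pass grades k documents and runs both generation checks,
    which is an upper bound when the first check short-circuits.
    """
    per_pass = 1  # the generation call itself
    if grade_docs:
        per_pass += k  # one relevance grade per retrieved document
    if grade_generation:
        per_pass += 2  # grounding check plus answer check
    passes = rewrites + 1
    return per_pass * passes + rewrites  # each rewrite is one more call

print(llm_calls(k=5, grade_docs=False, grade_generation=False))
print(llm_calls(k=5, grade_generation=False))
print(llm_calls(k=5))
print(llm_calls(k=5, rewrites=1))
```

With k = 5, a single rewrite more than doubles the cost of a query, which is why the retry cap and a smaller k for graded retrieval matter.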

Mitigation strategies:

- Use cheaper/faster models (GPT-4o-mini) for grading steps, stronger models (GPT-4o) for final generation

- Run document grading in parallel (grade all k documents simultaneously)

- Cache common queries and their retrieval results

- Set tight timeouts on each step
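Two of these mitigations, parallel grading and caching, can be sketched with the standard library alone. The grader below is a hypothetical placeholder whose `sleep` stands in for one LLM round trip, and the cached retriever returns fixed placeholder results. With a real client, the same structure applies if the grading call is thread-safe.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from functools import lru_cache

def grade(question: str, doc: str) -> bool:
    """Hypothetical grader: the sleep stands in for one LLM round trip."""
    time.sleep(0.05)
    return question.split()[0].lower() in doc.lower()

def grade_all(question: str, docs: list[str]) -> list[str]:
    """Grade all documents concurrently; pool.map preserves input order."""
    if not docs:
        return []
    with ThreadPoolExecutor(max_workers=len(docs)) as pool:
        flags = list(pool.map(lambda d: grade(question, d), docs))
    return [d for d, keep in zip(docs, flags) if keep]

@lru_cache(maxsize=1024)
def cached_retrieve(question: str) -> tuple[str, ...]:
    """Cache retrieval results per question; a tuple keeps the cached value immutable."""
    return ("vLLM is fast", "Ollama is local")  # placeholder results

docs = list(cached_retrieve("vllm latency"))
docs_again = list(cached_retrieve("vllm latency"))  # served from the cache
relevant = grade_all("vllm latency", docs)
print(relevant, cached_retrieve.cache_info().hits)
```

Grading k documents in parallel costs roughly one round trip instead of k, at the price of k concurrent requests against the model provider's rate limit.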

3. Observability and Debugging

Agentic flows are harder to debug than linear pipelines. Each query takes a different path through the graph.

# LangGraph: Enable tracing with LangSmith
import os

os.environ["LANGCHAIN_TRACING_V2"] = "true"
os.environ["LANGCHAIN_PROJECT"] = "agentic-rag"

# Every node execution, conditional edge decision, and LLM call
# is logged with full input/output

# LlamaIndex: Use event streaming
from llama_index.core.agent.workflow import FunctionAgent

# `llm` here must be a LlamaIndex LLM (e.g. the OpenAI instance used earlier),
# not the LangChain ChatOpenAI object from the LangGraph section.
agent = FunctionAgent(tools=tools, llm=llm)

handler = agent.run(user_msg="query")
async for event in handler.stream_events():
    print(f"[{event.__class__.__name__}] {event}")

response = await handler

For comprehensive observability patterns, see Observability for Multi-Turn LLM Conversations.

4. Evaluation

Agentic RAG needs evaluation at two levels: retrieval quality and agent decision quality.

| Metric | What It Measures | Level |

|---|---|---|

| Recall@k | Were the right documents retrieved? | Retrieval |

| Answer Faithfulness | Is the answer grounded in context? | Generation |

| Answer Relevance | Does the answer address the question? | Generation |

| Grader Accuracy | Did the relevance grader make correct decisions? | Agent |

| Routing Accuracy | Did the router select the right tool? | Agent |

| Retry Efficiency | How often do retries improve the answer? | Agent |

| Avg. Steps per Query | How many agent steps before completion? | Efficiency |
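Several of these metrics reduce to a few lines once labeled data exists. The sketch below assumes you have, per query, the retrieved document ids, the set of truly relevant ids, the router's choices with expected routes, and answer-quality scores before and after a retry. All sample values are invented.

```python
def recall_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Share of the relevant documents that appear in the top-k results."""
    if not relevant:
        return 0.0
    return len(set(retrieved[:k]) & relevant) / len(relevant)

def accuracy(predicted: list[str], expected: list[str]) -> float:
    """Fraction of matching decisions; works for router or grader labels."""
    return sum(p == e for p, e in zip(predicted, expected)) / len(expected)

def retry_efficiency(before: list[float], after: list[float]) -> float:
    """Fraction of retried queries whose answer score improved after the retry."""
    improved = sum(a > b for b, a in zip(before, after))
    return improved / len(before)

print(recall_at_k(["d1", "d4", "d2"], {"d1", "d2", "d3"}, k=2))
print(accuracy(["sql", "web", "vector"], ["sql", "vector", "vector"]))
print(retry_efficiency([0.2, 0.5, 0.9], [0.6, 0.5, 0.7]))
```

A low retry efficiency is a signal that rewrites add latency without improving answers, which argues for a lower retry cap or a better rewrite prompt.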

5. When NOT to Use Agentic RAG

Agentic RAG adds complexity and latency. Don’t use it when:

- Queries are simple and domain-specific — standard RAG with good chunking and reranking suffices

- Latency is critical — each agent step adds 200–500ms

- Your corpus is small and homogeneous — routing between sources isn’t needed

- Budget is tight — 5–10x more LLM calls per query

Start simple: build a solid standard RAG pipeline first (good chunking, hybrid search, reranking), then add agentic patterns only where evaluation shows specific failures.

Summary

| Concept | Key Takeaway |

|---|---|

| Standard RAG limitation | Single-shot retrieval fails on complex, multi-part, or ambiguous queries |

| Agentic design patterns | Reflection, planning, tool use, multi-agent collaboration |

| Self-RAG | Adaptive retrieval + reflection tokens for grounding and usefulness checks |

| Corrective RAG (CRAG) | Retrieval evaluator + web search fallback + knowledge strip filtering |

| LangGraph | Explicit state graphs with conditional edges for precise control flow |

| LlamaIndex agents | FunctionAgent with QueryEngineTools for autonomous tool routing |

| Sub-question decomposition | Automatically break complex queries into retrievable sub-questions |

| Production | Limit retries, use cheap models for grading, trace every step, evaluate agent decisions |

| Key principle | Start with simple RAG, add agentic patterns only where evaluation shows failures |

Agentic RAG is the natural evolution of retrieval-augmented generation: from a fixed pipeline to an adaptive system that reasons about what to retrieve, evaluates what it finds, and iterates until it gets it right. The tools — LangGraph for explicit graphs, LlamaIndex agents for autonomous routing — make this practical to implement today.

For the foundational pipeline these agents enhance, see Building a RAG Pipeline from Scratch. For the chunking strategies that feed retrieval, see Advanced Chunking Strategies for RAG. For embedding and reranking within retrieval tools, see Embedding Models and Reranking for RAG. For graph-based retrieval as an agent tool, see GraphRAG: Knowledge Graphs Meet Retrieval-Augmented Generation.

References

- Singh, Ehtesham, Kumar & Khoei, Agentic Retrieval-Augmented Generation: A Survey on Agentic RAG, 2025. arXiv:2501.09136

- Asai, Wu, Wang, Sil & Hajishirzi, Self-RAG: Learning to Retrieve, Generate, and Critique through Self-Reflection, 2023. arXiv:2310.11511

- Yan, Gu, Zhu & Ling, Corrective Retrieval Augmented Generation, 2024. arXiv:2401.15884

- LangChain Blog, Self-Reflective RAG with LangGraph, 2024.

- LlamaIndex Documentation, Building an Agent, 2026.

- LangGraph Documentation, Agentic RAG Tutorial, 2026.

Read More

- Add evaluation metrics to measure whether agent decisions actually improve answer quality.

- Extend your agents with multimodal retrieval tools for images, tables, and PDF documents.

- Explore hybrid and corrective RAG architectures like Self-RAG and CRAG for self-correcting retrieval loops.

- Scale agentic pipelines to production with caching, routing, and observability.